Improvisation can be seen as a major driving force in human interaction, strategic in every aspect of communication and action. In its highest form, improvisation blends structured, planned, directed actions with local decisions and deviations that are hard to predict; these optimize adaptation to context, uniquely express the creative self, and stimulate coordination and cooperation between agents. Building powerful, realistic human-machine environments for improvisation requires going beyond the mere software engineering of creative agents with audio listening and generation capabilities, which is what has mostly been done so far. In the new configurations of "physical interreality" (a mixed-reality scheme in which the physical world is actively modified by human action), human subjects are immersed and engaged in tangible actions that allow full embodiment in the digital, physical, and social worlds.

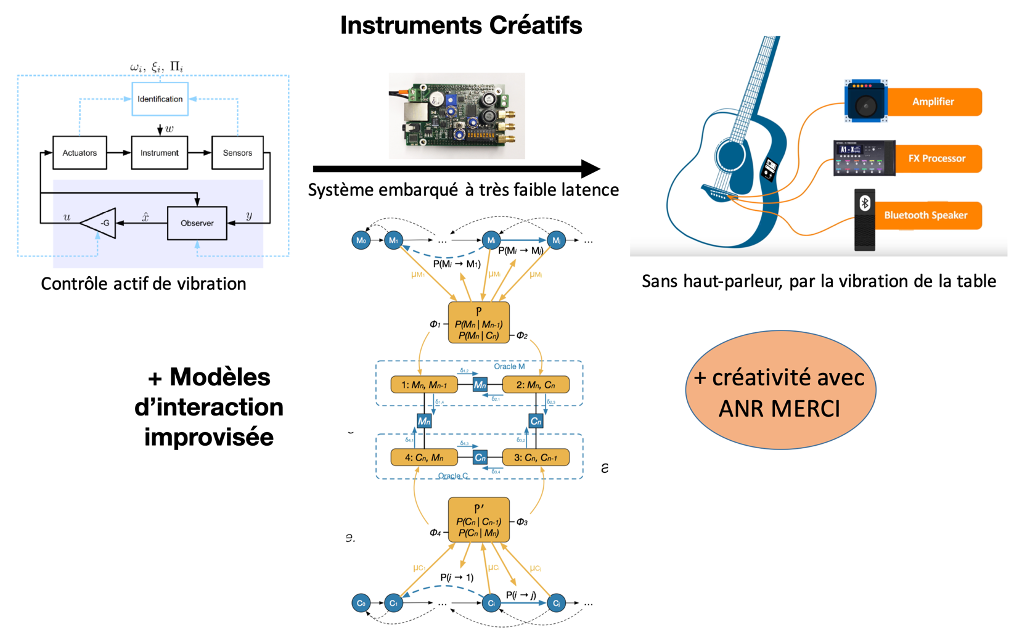

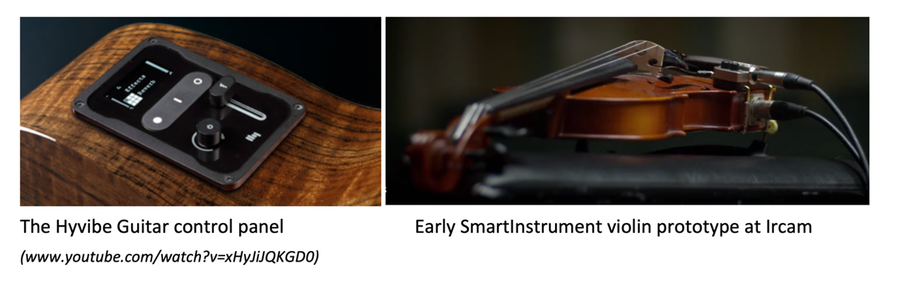

The main objective of this project is to create the scientific and technological conditions for mixed-reality musical systems that enable improvised human-machine interactions, based on the interrelation of creative digital agents and active acoustic control in musical instruments. We call these mixed-reality devices creative instruments. Functionally integrating creative artificial intelligence and active acoustic control into the organological core of the musical instrument, in order to foster plausible situations of physical interreality, requires the synergy of highly interdisciplinary public and private research, as carried out by the partners. Such advances are likely to disrupt artistic and social practices and, in the long run, to have a strong impact on the music industry as well as on amateur and professional musical practice.

A creative instrument will be able to listen continuously to the performance of the musician playing it, in order to determine the musician's musical orientations, and to engage in a creative dialogue with them in a novel experience of mixed musical reality, where the sounds produced by the performer and those created artificially blend into a meaningful musical polyphony. This mixed musical reality will unfold across the melodic, harmonic, rhythmic, and orchestral dimensions of music, with a creative musical companion located at the very heart of the instrument and acting as an artistic avatar, a creative assistant, a partner, and a new incentive to learn, practice, and communicate in the language of music.

Ircam team: Représentations musicales (Musical Representations)